Navigating convergence and divergence between the EU MDR and EU AI Act

Abstract

This article is the first of two on the EU Artificial Intelligence Act (EU AI Act) and its interaction with the EU Medical Devices Regulation (EU MDR) for AI‑enabled medical devices. It examines the shifting regulatory terrain shaped by the EU MDR and the AI Act, highlighting points of convergence and divergence. It analyzes the EU AI Act’s horizontal framework and risk-based classification, demonstrating how they complement the EU MDR’s foundation in patient safety. Using practical examples and decision pathways, the discussion clarifies when an AI-enabled device is classified as high-risk and how to navigate overlapping rules while balancing innovation. The second article identifies key implementation gaps and proposes solutions on how manufacturers can use existing EU MDR processes and international standards to operationalize EU AI Act obligations.

Keywords: AI system, EU AI Act, EU MDR, risk classification

Introduction

For more than half a century, artificial intelligence has operated alongside people,1 sorting emails, suggesting content, guiding searches, and making everyday technologies like Alexa run more smoothly. For a long time, most of this activity occurred quietly in the background. AI was present, but it was not something people noticed or discussed. That changed when generative AI tools became available to the public. People were no longer just hearing about AI; they were interacting with it directly. Everyone, from engineers to clinicians to policymakers, wanted to discuss adaptive algorithms, learning models, and the uncertain future of the human race.2 The underlying technology had existed for years, but widespread access brought awareness, and with greater awareness came new questions. AI has become pervasive across various sectors, particularly in healthcare, where diagnostic tools flag tumors, wearables monitor heart rhythms, robotic systems assist in surgery, and algorithms predict patient deterioration before it occurs.3 While each breakthrough offers the promise of improved outcomes, it also introduces new questions about bias, accountability, privacy, and the appropriate role of a machine in decision-making about human health.4 Questions persist around systems that continue to learn after deployment and how such technologies can remain reliable, fair, and accountable as they evolve.

While the progress in AI has been relentless, its governance has lagged behind. Regulators often face the difficult task of keeping pace without stifling innovation.5 And for regulatory professionals, it can be challenging to determine which frameworks should be followed and which standards should be applied. These are not unfamiliar questions.6 Many regulatory and quality professionals remember the uncertainty that came with the EU MDR back in 2017. As the EU AI Act comes into effect, regulatory professionals may feel similar anxieties.

This is the first of two articles that will build on a presentation given by the authors at the RAPS Convergence conference in October 2025. It will explore how existing standards, upcoming standards, and risk classification can help regulatory and quality professionals interpret and apply the EU AI Act alongside the EU MDR.

Evolving risk frameworks in the era of artificial intelligence

The EU’s New Legislative Framework (NLF) provides a coordinated legislative structure that aligns multiple product regulations to support public safety across diverse sectors.7 Each regulation addresses a specific type of risk, from industrial hazards to patient protection. Within this structure, the EU MDR concentrates on clinical safety and performance while the EU AI Act introduces requirements for artificial intelligence systems, focusing on issues such as bias, transparency, and explainability. These instruments are designed to function together without conflict.

Under this structure, the EU MDR acts as lex specialis (the more specific rule), focusing on medical device safety and performance. The EU AI Act serves as lex generalis, a broader regulation that ensures AI systems of any kind operate in line with European values and fundamental rights.8 A key area where the EU MDR and AI Act intersect is risk management, which directly underpins how safety is defined and demonstrated. Regulators define safety as a condition in which the benefits of a device outweigh its risks. Patients, however, often view safety in absolute terms; they seek reassurance that a product will not expose them to harm.9 Yet every medical technology carries risk – from deep-brain stimulators to continuous glucose monitors to basic imaging algorithms. Regulatory approval, therefore, depends on whether the benefit outweighs the risk.10

AI introduces unique risks regulators have not had to quantify before, including bias in training data; opacity in decision-making; cybersecurity, privacy, and fundamental rights issues; and the need for human oversight.4 These risks do not conform to traditional safety and effectiveness frameworks, yet they directly affect trust in the healthcare system.11 The EU AI Act brings these considerations into focus, ensuring that innovation in AI never comes at the expense of fundamental rights.12

Given the complex nature of regulating AI applications within healthcare, this article examines how the EU MDR and AI Act interact and how existing quality and regulatory frameworks can be applied in a complementary manner. It provides a practical lens for contextualizing the EU AI Act within the established structure of medical device regulation, enabling professionals to align compliance strategies without reinventing existing systems. As per the Medical Device Coordination Group (MDCG) guidance on the interplay between the EU MDR, IVDR, and AI Act, it is clear that regulation is not being replaced; it is being refined to reflect the complexity and capability of modern AI systems.13

Purpose

This paper explores the intricate relationship between two landmark regulations, the EU MDR and the EU AI Act, and provides clarity on the evolving regulatory landscape for AI-enabled medical devices. Current industry analyses identify a clear gap in understanding the interrelation of these frameworks. The purpose of this first paper in the two-part series is twofold:

- To analyze the convergence and divergence between the EU MDR and AI Act; and

- To explain the evolving concept of risk under both frameworks, using practical examples.

Part two of this series will map the existing standards ecosystem to support EU AI Act compliance and will propose a call to action for regulatory and quality professionals.

The EU AI Act: An introduction

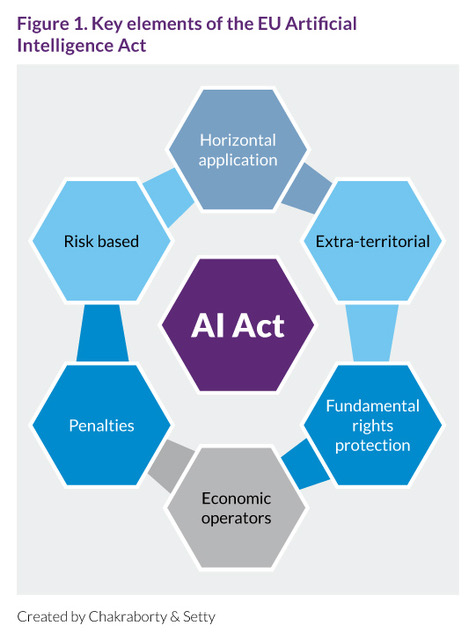

The EU AI Act was published in the Official Journal of the European Union in July 2024. Following a deliberate three-year process that began with its initial proposal in 2021, the act establishes a structured, risk-based framework for governing AI systems across Europe.14 At its core, the EU AI Act sorts technologies into categories based on the level of risk they pose – ranging from unacceptable practices that are banned, to high-risk systems facing the strictest oversight, through to minimal-risk applications that face lighter regulatory requirements (Figure 1). This approach balances innovation with protection, ensuring AI systems serve people without compromising safety or fundamental rights. The sections below outline the EU AI Act’s key elements and highlight their implications for medical device professionals.

The EU AI Act is a horizontal legislation

The EU AI Act represents the world’s first comprehensive horizontal legislative framework for artificial intelligence.15 Unlike sector-specific regulations such as the EU MDR, the EU AI Act extends across multiple sectors and applications from healthcare and finance to education, transport, and consumer products.16 Its horizontal nature establishes a unified set of principles and obligations for AI systems regardless of their domain, ensuring a consistent approach to trust, accountability, and human protection across the European internal market.

The EU AI Act is extraterritorial

A defining characteristic of the act is its extraterritorial scope, echoing the reach of the General Data Protection Regulation (GDPR).17 The requirements of the EU AI Act apply not only to entities established within the EU but also to any organization, regardless of geographic location, that places an AI system on the EU market, or whose system outputs are used within the EU. In practice, this means that providers, importers, distributors, and deployers of AI systems outside Europe must comply with EU AI Act requirements if their products or services reach EU users.18

The EU AI Act introduces economic operators

Just as the EU MDR clearly defines roles for manufacturers, importers, distributors, and users, the EU AI Act establishes a parallel set of economic operators with specific responsibilities: providers, deployers, importers, and distributors.16 An important distinction is that key terms differ between the two regulations. While the EU MDR uses manufacturer and end user, the EU AI Act refers to provider and deployer instead.13 Under the EU AI Act, economic operators are defined as:

- Providers, who develop or place an AI system on the market (e.g., manufacturers, software developers, and even some distributors);

- Deployers, who use AI systems under their authority (e.g., hospitals, clinics, insurance companies, labs, and sometimes healthcare professionals). It is important to note that deployers under the EU AI Act are not synonymous with users under the EU MDR. While the term users refers to the individuals who operate a medical device per EU MDR, deployers, as stated above, can represent entire organizations or entities that control and oversee the system’s use, extending beyond the scope of a single end user;13 and

- Importers and distributors, who ensure compliance and traceability across the supply chain.

Each of these actors carries distinct obligations related to transparency, technical documentation, data governance, human oversight, and postmarket monitoring. This structure ensures there are no gaps in responsibility and that every organization involved in developing, deploying, distributing, or using an AI system is accountable for how it performs and operates safely.

The EU AI Act is risk-based in its approach

At the structural level, the EU AI Act (Articles 5-15: Risk categories; Annexes I-III) adopts a risk-based regulatory model, distinguishing between four categories of AI applications: unacceptable risk, high risk, transparency-requiring AI systems, and minimal risk.16

Unacceptable risk. This category encompasses AI uses deemed incompatible with EU values, such as emotion recognition in the workplace or in educational institutions (other than for medical or safety reasons), which are prohibited under Chapter II, Article 5 of the EU AI Act.

High risk. High-risk systems include those in which any error or bias could cause harm or discrimination, such as a diagnostic tool supporting image analysis, as referenced in Chapter III, Article 6. For example, unrepresentative training data can result in biased algorithms that could lead to misdiagnosis (e.g., under-detecting skin cancer in darker skin tones), resulting in harm due to delayed treatment or unnecessary interventions.

Transparency-requiring AI systems. This category is often referred to as limited risk, although that term does not explicitly appear anywhere in the EU AI Act. Under Chapter IV, and in particular Article 50, the act identifies certain AI uses that trigger explicit transparency duties when individuals interact with or are subject to the system. Typical examples include conversational or generative tools that could be mistaken for human interaction, wellness or coaching applications using AI, and systems performing emotion recognition or biometric categorization. In these cases, users must be clearly informed that they are engaging with an AI system, or that such analysis is taking place, so they can interpret outputs appropriately and make an informed decision about whether to continue using the system.

Importantly, transparency‑requiring AI systems do not constitute a separate risk tier in the same sense as unacceptable or high‑risk. A given system may be both high‑risk and transparency‑requiring if, for example, it supports clinical decisions and also interacts directly with patients. In such cases, the high‑risk obligations would apply in addition to Article 50 transparency duties. Conversely, systems that are neither prohibited nor high‑risk may still fall into this transparency‑requiring subset purely because of how they interact with people. For regulatory and quality professionals, this means that the informal shorthand limited risk can be misleading, as the legally meaningful question is whether a system falls under the transparency obligations and, if so, how those duties are implemented in design, labeling, and user communication.
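
The point that transparency duties overlay the risk tiers, rather than forming a tier of their own, can be illustrated with a short sketch. It is a simplified model, not a legal test: the names `RiskTier`, `AISystemProfile`, `triggers_article_50`, and `applicable_obligations` are hypothetical and are introduced here only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    OTHER = "neither prohibited nor high-risk"


@dataclass(frozen=True)
class AISystemProfile:
    name: str
    tier: RiskTier
    # Hypothetical flag: True when the system interacts with people in a way
    # that triggers Article 50 (e.g. a conversational tool).
    triggers_article_50: bool


def applicable_obligations(profile: AISystemProfile) -> list[str]:
    """Return the obligation sets that apply; transparency is additive, not a tier."""
    if profile.tier is RiskTier.UNACCEPTABLE:
        return ["prohibited under Article 5"]
    obligations: list[str] = []
    if profile.tier is RiskTier.HIGH:
        obligations.append("high-risk requirements (Chapter III)")
    if profile.triggers_article_50:
        obligations.append("transparency duties (Article 50)")
    # Systems with neither trigger fall back to voluntary codes of conduct.
    return obligations or ["voluntary codes of conduct"]


if __name__ == "__main__":
    examples = [
        AISystemProfile("clinical decision support with patient chat", RiskTier.HIGH, True),
        AISystemProfile("wellness coaching chatbot", RiskTier.OTHER, True),
        AISystemProfile("appointment scheduling tool", RiskTier.OTHER, False),
    ]
    for example in examples:
        print(example.name, "->", applicable_obligations(example))
```

Keeping the tier and the Article 50 trigger as separate fields reflects the paragraph above: a single system can accumulate both sets of duties, and a system outside the high-risk tier can still carry transparency duties.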

Minimal risk. This category covers most everyday AI uses (e.g., appointment or billing tools used in healthcare settings) and is subject to voluntary codes of conduct rather than formal regulation.

This proportional approach allows the EU to tailor regulatory oversight according to the potential impact of the system on human welfare and rights. For the medical sector in particular, most AI systems used for diagnosis, monitoring, or therapeutic purposes fall within the high-risk category, meaning they must comply with the technical, organizational, and ethical safeguards outlined in Chapter III of the act.

Definition of an AI system

Before examining the detailed requirements and classification framework of the EU AI Act, it is important to understand how the regulation defines an AI system (Figure 2). Without this foundation, organizations risk applying controls out of context – either overregulating ordinary software or overlooking systems that warrant stronger oversight.

In the medical device field, AI is often equated with machine learning or neural networks. While machine learning/neural networks are a major part of many AI technologies, the EU AI Act uses a broader definition. The intention is to include not only data-driven models but also systems that use logic, rules, or knowledge-based reasoning to produce outcomes that influence decisions or actions.

Under Article 3(1), an AI system is defined as a machine-based system that is designed to operate with varying levels of autonomy, that may exhibit adaptiveness after deployment, and that, for explicit or implicit objectives, infers from the input it receives how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments.16

This definition of an AI system is closely tied to its function and level of autonomy, both of which correlate with risk. A system that predicts is distinct from one that recommends or decides. For instance, a predictive system, such as a wearable device that alerts a user to possible high blood pressure, forecasts an outcome. However, the user or clinician still makes the final judgment. A recommendation system goes further; it interprets findings and suggests a specific next step, as with an AI tool that analyzes a CT scan and recommends that a radiologist review a potentially malignant lesion. At the highest level of autonomy are decision systems that act without human intervention. A closed-loop insulin pump that autonomously adjusts insulin levels based on glucose readings illustrates this category, which typically carries the highest inherent risk.

The key point is that under the EU AI Act, AI is defined by what it does rather than how complex it is. Any system that can infer from data and act, even partly on its own, falls under this definition. This functional approach helps ensure that both new and existing medical technologies are evaluated consistently, regardless of the technical method used to build them.

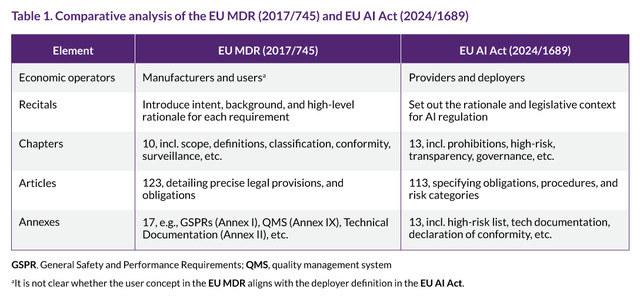

General structure of the EU AI Act

Structurally, the EU AI Act mirrors the EU MDR. This is not surprising, as both the EU AI Act and EU MDR are part of the NLF, as noted in Recital 87 of the EU AI Act.16 The shared architecture allows regulators, manufacturers, and conformity assessment bodies to apply a familiar framework while addressing AI-specific challenges. Table 1 offers a side-by-side comparison of how the EU AI Act and EU MDR align in their structure. For professionals experienced in navigating the EU MDR, the EU AI Act may feel like a new building constructed on the same blueprint – recognizable in structure but presenting new regulatory terrain.

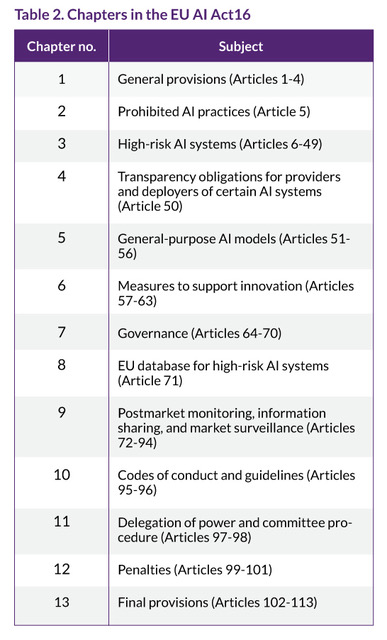

At a high level, the EU AI Act comprises 113 articles organized into 13 chapters, supplemented by 13 annexes. The first four chapters (Chapters I-IV: Articles 1-50) set the foundation: general provisions, prohibited AI practices, requirements for high-risk AI systems, and transparency obligations for certain AI systems defined under Article 50. These are followed by chapters dedicated to general-purpose AI models, governance, postmarket monitoring, and penalties. This progression mirrors the EU MDR’s lifecycle-based approach from development and conformity assessment through to surveillance and enforcement.16

Key structural highlights: Chapters 3, 4, 7, 8, and 9

Although the EU AI Act is vast, only a subset of chapters carries most of the regulatory weight for medical device manufacturers. Chapters 3, 4, 7, 8, and 9 define how AI systems are classified, governed, documented, and monitored across their lifecycle.

Chapter 3 of the EU AI Act defines high-risk AI systems, particularly those integrated into medical devices subject to third-party conformity assessment under the EU MDR, requiring comprehensive risk management, data governance, human oversight, and technical documentation throughout the AI system's lifecycle. Chapter 4 emphasizes transparency requirements, including informing users that they are interacting with an AI system, labeling synthetic content, and providing explanations for AI-driven outputs. Chapters 7, 8, and 9 further complement this framework by covering general-purpose AI governance, postmarket monitoring, and enforcement mechanisms. Together, these chapters support continuous oversight, accountability, and alignment with EU-wide regulatory standards, particularly for medical device applications where AI may influence patient safety and clinical decision-making. For additional information on the specific chapters, refer to the EU AI Act.16 Table 2 outlines the subject of each chapter in the EU AI Act.

The annexes of the EU AI Act further specify requirements for high-risk systems, technical documentation, and conformity assessment. Annex I of the EU AI Act lists European Union harmonization legislation, including sectoral regulations such as the EU MDR, establishing the legal connection between the EU AI Act and existing product‑specific frameworks within the NLF. Annex III lists AI systems that are automatically considered high risk, including those used in healthcare and critical infrastructure. Annex IV defines the documentation and evidence manufacturers must provide to demonstrate compliance, aligning closely with the EU MDR’s technical documentation requirements.16

A safety component is a new and important concept introduced by the EU AI Act. It is defined in Article 3(14) as “a component of a product or of an AI system which fulfils a safety function for that product or AI system, or the failure or malfunctioning of which endangers the health and safety of persons or property.” This moves safety considerations beyond the traditional focus on the finished product to include individual elements that play a critical role in preventing harm.16

Consider an AI-based predictive maintenance module used within a vision system on a medical device manufacturing line. The core vision system performs defect detection on products, while the predictive maintenance module tracks sensor data, vibration patterns, and camera calibration values to anticipate when the imaging hardware might fail or drift out of tolerance. If the module identifies early signs of wear or misalignment, it issues alerts so that maintenance can be performed before inspection quality is compromised. Although this module is not itself a medical device or accessory, its malfunction could delay maintenance, allow undetected inspection failures, and ultimately affect product safety. Under the EU AI Act, the module would be regarded as a safety component because its proper operation helps to prevent risks to health and safety. Recognizing and classifying such safety components within AI systems broadens the scope of product safety and risk management. It requires manufacturers to consider not only hardware functions, but also how supporting algorithms operate and how the data they rely on is monitored and controlled, as part of the overall safety profile of the product.

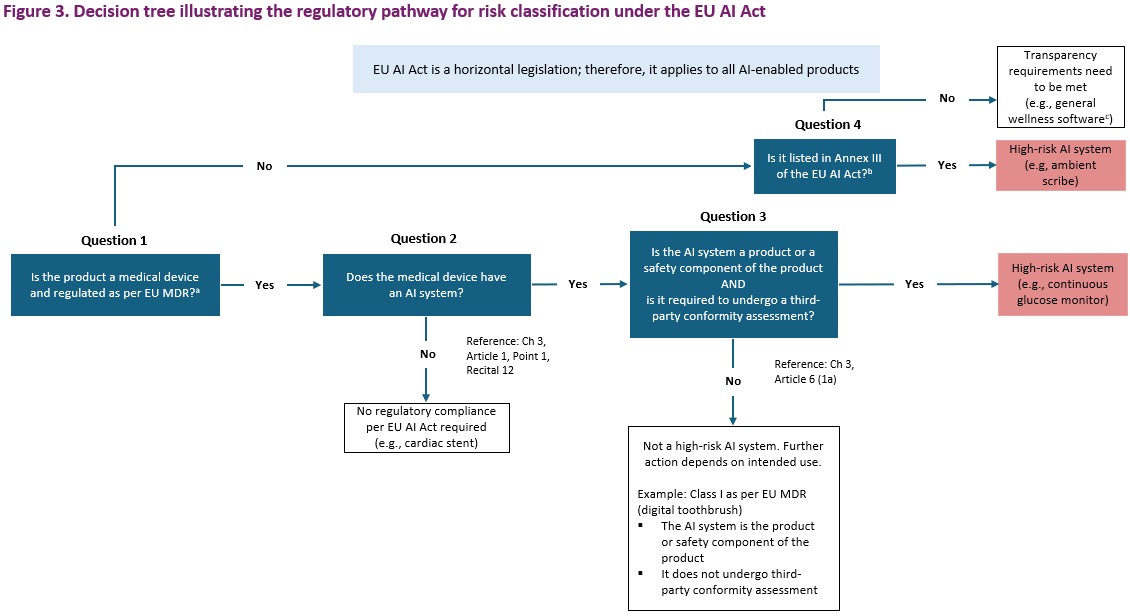

Risk classification as per EU AI Act

Both the EU AI Act and the EU MDR rely on risk-based classification, yet mapping the product risk classification between the two frameworks is not straightforward. The definitions, criteria, and obligations do not always align, leaving manufacturers with limited guidance on how to assess and classify AI-enabled medical devices. To help bridge this gap, the authors have created a risk classification flowchart (Figure 3) that maps regulatory requirements to a given product based on an understanding of the EU AI Act and EU MDR. It is intended to guide regulatory, quality, and engineering teams in translating high-level legal requirements into concrete, operational decisions. By applying this framework, teams can determine whether a product qualifies as a medical device, whether it incorporates AI as defined under the act, and whether it falls into the high-risk category as per the EU AI Act, all while aligning with existing EU MDR requirements.

EU AI Act, EU Artificial Intelligence Act; EU MDR, EU Medical Devices Regulation

a. Annex I of the EU AI Act, Section A (list of Union harmonization legislation based on the New Legislative Framework), Point 11, references the EU MDR.

b. Typically, any item listed in Annex III of the EU AI Act is considered high-risk as per Annex III (5). However, exceptions can be claimed under Article 6(3), which include the following: (i) the AI system does not pose a significant risk of harm to the health, safety, or fundamental rights of natural persons, including by not materially influencing the outcome of decision making; (ii) the AI system performs a narrow procedural task; and/or (iii) the AI system improves the result of a previously completed human activity.

c. Currently, an ambient scribe is not considered a medical device per EU MDR. If it processes clinical information and affects how medical data is interpreted or prioritized, it would be considered a high-risk AI system. However, if the system is defined as an AI-powered tool that performs verbatim voice-to-text transcription without drawing conclusions, summarizing content, or prompting actions, it contains AI within the product but qualifies for an exception to Annex III classification under Article 6(3).

Created by Chakraborty & Setty

The decision tree in Figure 3 begins with Question 1, which asks whether a product qualifies as a medical device under the EU MDR definition. Since Annex I of the EU AI Act,16 Section A (Point 11), references the EU MDR within its list of European Union harmonization legislation established under the NLF, this question is a logical starting point for establishing a relationship between the EU AI Act and EU MDR. For greater context, Annex I lists various European Union laws that aim to standardize rules across member states. These laws cover a wide range of areas, including machinery, toy safety, recreational watercraft, lifts, equipment for potentially explosive atmospheres, radio equipment, pressure equipment, cableway installations, personal protective equipment, appliances burning gaseous fuels, medical devices, civil aviation security, two- or three-wheel vehicles, agricultural and forestry vehicles, marine equipment, rail system, motor vehicles and their trailers, and aircraft.16

If the product in question is not a medical device (No to Question 1), the pathway proceeds to Question 4 (the decision pathway for Question 4 is elucidated in a later section). If it is a medical device (Yes to Question 1), the pathway advances to Question 2, which asks whether the medical device has an AI system. The definition of an AI system is provided in Chapter I, Article 3(1), and Recital 12 of the EU AI Act.16 If no AI system is present, then the EU AI Act does not apply (No to Question 2). For example, a cardiac stent, which is classified as a Class III medical device under the EU MDR, is an implantable device with no AI systems in the product or as a safety component.

If the product incorporates an AI system (Yes to Question 2), proceed to Question 3, which asks whether the AI system is itself the product or a safety component of the product, and whether it is subject to third-party conformity assessment.16 If both conditions are met (Yes to Question 3), then the product is classified as a high-risk system as per Article 6(1) of the EU AI Act, which states:

“an AI system shall be considered to be high-risk where both of the following conditions are fulfilled: (a) the AI system is intended to be used as a safety component of a product, or the AI system is itself a product, covered by the Union harmonisation legislation listed in Annex I; (b) the product whose safety component pursuant to point (a) is the AI system, or the AI system itself as a product, is required to undergo a third-party conformity assessment, with a view to the placing on the market or the putting into service of that product pursuant to the Union harmonisation legislation listed in Annex I.”16

An illustrative example of a medical device that is classified as a high-risk AI system is a continuous glucose monitor with an insulin pump. This is classified as a Class IIb device under the EU MDR and is therefore required to undergo a third-party conformity assessment. When a device uses AI, whether embedded in the product itself or functioning as a safety component supporting insulin delivery or hypoglycemia prediction, it takes on additional regulatory significance. Because the AI directly influences patient safety and the device undergoes third-party conformity assessment, the system would be considered a high-risk AI system under the EU AI Act and must comply with the rigorous obligations set forth in the EU AI Act, including risk management, data governance, and quality assurance requirements.

If these conditions are not met (No to Question 3), then it is not a high-risk AI system. For example, consider a digital toothbrush, which is a Class I device as per EU MDR and does not require third-party conformity assessment. Although a digital toothbrush could incorporate AI, because it does not satisfy both criteria, it would not be classified as a high-risk AI system as per the EU AI Act.
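
Questions 1 to 3 of the pathway described above can be sketched as a small function. This is a minimal illustration under stated assumptions, not a compliance tool: the `Product` fields and the function name `classify_medical_device_branch` are hypothetical, and each boolean stands in for a documented regulatory determination that a team would make separately.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    is_medical_device: bool                   # Question 1
    has_ai_system: bool                       # Question 2
    ai_is_product_or_safety_component: bool   # Question 3, first condition
    needs_third_party_assessment: bool        # Question 3, second condition


def classify_medical_device_branch(product: Product) -> str:
    """Walk Questions 1 to 3 and return the outcome as a short label."""
    if not product.is_medical_device:
        return "go to Question 4 (Annex III check)"
    if not product.has_ai_system:
        return "EU AI Act does not apply"
    # Both conditions must hold for the Article 6(1) high-risk outcome.
    if product.ai_is_product_or_safety_component and product.needs_third_party_assessment:
        return "high-risk AI system (Article 6(1))"
    return "not a high-risk AI system"


if __name__ == "__main__":
    examples = [
        Product("cardiac stent", True, False, False, True),
        Product("AI-enabled glucose monitor with insulin pump", True, True, True, True),
        Product("AI-enabled digital toothbrush", True, True, True, False),
    ]
    for example in examples:
        print(example.name, "->", classify_medical_device_branch(example))
```

The three examples mirror the cardiac stent, glucose monitor, and digital toothbrush cases discussed above. The toothbrush fails only the second condition of Question 3, which is enough to keep it out of the high-risk category.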

As discussed above, if the product in question is not a medical device, Question 4 would apply. Question 4 asks whether the product is listed in Annex III of the EU AI Act. If the product is not listed (No to Question 4), then transparency requirements must be met under Chapter IV, Article 50 of the EU AI Act.16 One illustrative example is general wellness software, which facilitates healthy living practices (e.g., exercise levels, step tracking, or calories burned) without claiming to diagnose, treat, or mitigate any medical conditions, recommend medical decisions, or influence clinical decision-making. Ultimately, the intended use is crucial to determining the risk and transparency classification. If the software makes medical claims or influences clinical decision-making, both the risk and transparency considerations may change.

If the product in question is listed in Annex III (Yes to Question 4), it would be considered a high-risk AI system. It is important to note that Article 6(3) of the EU AI Act allows exceptions to Annex III based on intended use, under which the product in question would not be considered high risk. For example, an AI-based patient appointment triage system for hospital administration might be exempt if it “does not materially influence decision-making, executes a narrow task, or improves existing human activities.”16

The example of an ambient clinical scribe illustrates how small changes in intended use can shift an AI system’s classification under the EU AI Act. When the technology is limited to verbatim voice‑to‑text transcription – capturing spoken interactions and converting them into text without summarizing, interpreting, or suggesting actions – it typically performs a narrow, well‑controlled task. In this configuration, the product may fall within the exception for certain Annex III use cases, as it supports workflow efficiency without materially influencing clinical decisions or creating significant risks to health or fundamental rights (Article 6(3) of the EU AI Act).16

The picture changes when the same type of system is designed to extract clinically relevant information, generate structured notes, or feed data into electronic health records in ways that shape diagnosis, treatment, or triage. In these scenarios, the ambient scribe contributes directly to how clinical information is interpreted and prioritized and may influence when and how patients receive care. Such functionality corresponds to the high‑risk category in Annex III, Point 5(d) related to essential private and public services – specifically the provision covering AI systems that analyze or categorize emergency calls, support dispatch decisions, or set the priority for sending emergency responders such as police, fire, or medical services, including systems used for emergency‑care triage.16

In these cases, the system would be treated as a high‑risk AI system and would need to meet the corresponding obligations under the EU AI Act, including robust risk management, transparency, and oversight. This example highlights how subtle shifts in intended use can alter a product’s classification entirely, underscoring the importance of documenting intended purpose clearly and aligning it with the AI system’s actual functionality.
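
The Question 4 branch, including the ambient scribe example, can be sketched in the same way. The sketch deliberately simplifies the exception test to two hypothetical flags, `materially_influences_decisions` and `performs_narrow_task`; the class and function names are likewise illustrative assumptions, and a real assessment would rest on the full text of Article 6 and the documented intended purpose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NonDeviceAISystem:
    name: str
    listed_in_annex_iii: bool               # Question 4
    materially_influences_decisions: bool   # hypothetical flag for the exception test
    performs_narrow_task: bool              # hypothetical flag for the exception test


def classify_annex_iii_branch(system: NonDeviceAISystem) -> str:
    """Return the Question 4 outcome as a short label."""
    if not system.listed_in_annex_iii:
        return "not high-risk; check Article 50 transparency duties"
    # Simplified exception test: no material influence and a narrow task.
    if not system.materially_influences_decisions and system.performs_narrow_task:
        return "Annex III exception may apply"
    return "high-risk AI system (Annex III)"


if __name__ == "__main__":
    examples = [
        NonDeviceAISystem("general wellness software", False, False, False),
        NonDeviceAISystem("ambient scribe, verbatim transcription only", True, False, True),
        NonDeviceAISystem("ambient scribe that structures and prioritizes notes", True, True, False),
    ]
    for example in examples:
        print(example.name, "->", classify_annex_iii_branch(example))
```

The two scribe entries differ only in their intended-use flags, which is the point of the example: the same underlying technology lands on different outcomes depending on what it is documented to do.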

Risk classification in this article has so far focused on AI systems embedded in medical devices. However, the AI Act is a horizontal legislation that applies across sectors and products, not only to healthcare. This broader scope means organizations must look beyond clinical applications when assessing where the act applies.

For example, AI is used in human resources for tasks such as screening job candidates or supporting hiring decisions. These systems fall under the EU AI Act and are explicitly listed as high‑risk in Annex III because they can significantly affect access to employment and career progression (Annex III, Point 4(a) and (b), supported by Recital 57).16 This category includes AI tools used to target job advertisements, filter or rank applications, assess candidates, or monitor employee performance and behavior, all of which are subject to heightened safeguards under the EU AI Act’s high‑risk regime.

Therefore, organizations should not limit their focus to medical devices; they must also consider AI systems used in other processes, such as human resources. These systems carry substantial compliance obligations under the EU AI Act, including risk management, transparency, human oversight, and incident reporting. Adopting this broader perspective ensures comprehensive governance of AI systems across all organizational functions.

Mapping high-risk classification between the EU MDR and the EU AI Act

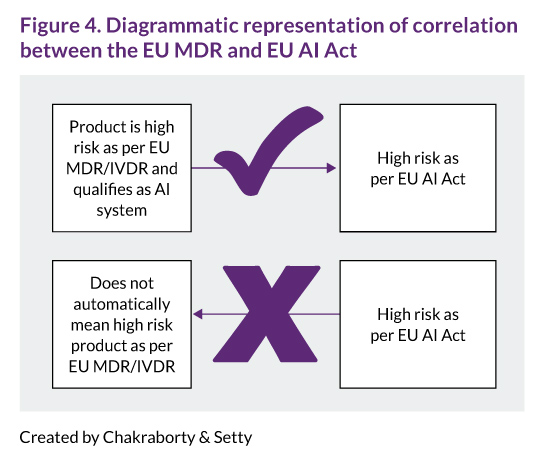

The relationship between EU MDR risk classes and the EU AI Act’s high‑risk definition is more nuanced than a simple one‑to‑one match. As clarified in Section 1(2) of the MDCG 2025-6 guidance,13 a medical device that is classified as high-risk under the EU MDR and also qualifies as an AI system will generally be treated as high-risk according to the EU AI Act, particularly where third-party conformity assessment by a notified body is required. However, as clarified in Section 1(4),13 the converse does not automatically hold: being high-risk as per the EU AI Act does not mean the product is high-risk under the EU MDR, and the requirements may differ depending on the intended use and regulatory pathway.

This interplay means regulatory professionals must map obligations across both frameworks. An AI-enabled device classified as high-risk under the EU MDR is high-risk under the EU AI Act only if it meets specific conditions, such as falling under legislation listed in Annex I of the act and requiring notified body review, or matching a use case listed in Annex III (Figure 4). Conversely, some high-risk AI systems designated by the EU AI Act may not trigger the highest EU MDR classification or may not require the same conformity assessment process. Each system must therefore be assessed under both frameworks, with obligations aligned but not assumed to be identical merely because the term high‑risk appears in both.
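The mapping logic described here can be sketched as a first-pass screen. The Python below is illustrative only and assumes a simplified reading of the two routes discussed in this article. The `Product` fields and the `ai_act_high_risk_routes` function are hypothetical names introduced for this sketch, which ignores exemptions and edge cases that a real regulatory assessment must consider.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Product:
    """Hypothetical record of the facts needed for a first-pass screen."""
    is_ai_system: bool                # meets the EU AI Act definition of an AI system
    is_medical_device: bool           # is, or is a safety component of, an EU MDR device
    needs_notified_body: bool         # third-party conformity assessment is required
    matches_annex_iii_use_case: bool  # e.g., emergency-care triage or recruitment


def ai_act_high_risk_routes(p: Product) -> List[str]:
    """Return the routes by which a product is high-risk under the EU AI Act.

    An empty list means neither route in this simplified screen applies.
    This is an assumption-laden sketch, not a legal determination.
    """
    routes: List[str] = []
    if not p.is_ai_system:
        return routes
    # Route 1: product legislation listed in Annex I plus notified body review.
    if p.is_medical_device and p.needs_notified_body:
        routes.append("Annex I")
    # Route 2: stand-alone use case listed in Annex III.
    if p.matches_annex_iii_use_case:
        routes.append("Annex III")
    return routes


# A self-certified AI-enabled device with no Annex III use case: neither route.
self_certified = Product(True, True, False, False)
# An AI-enabled device that requires notified body review.
nb_reviewed = Product(True, True, True, False)
# A recruitment screening tool that is not a medical device.
hr_tool = Product(True, False, False, True)

for name, product in [("self-certified device", self_certified),
                      ("notified-body device", nb_reviewed),
                      ("HR screening tool", hr_tool)]:
    print(name, ai_act_high_risk_routes(product))
```

Returning a list rather than a single label reflects the point that one product can be caught by more than one route, each of which must then be checked separately.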

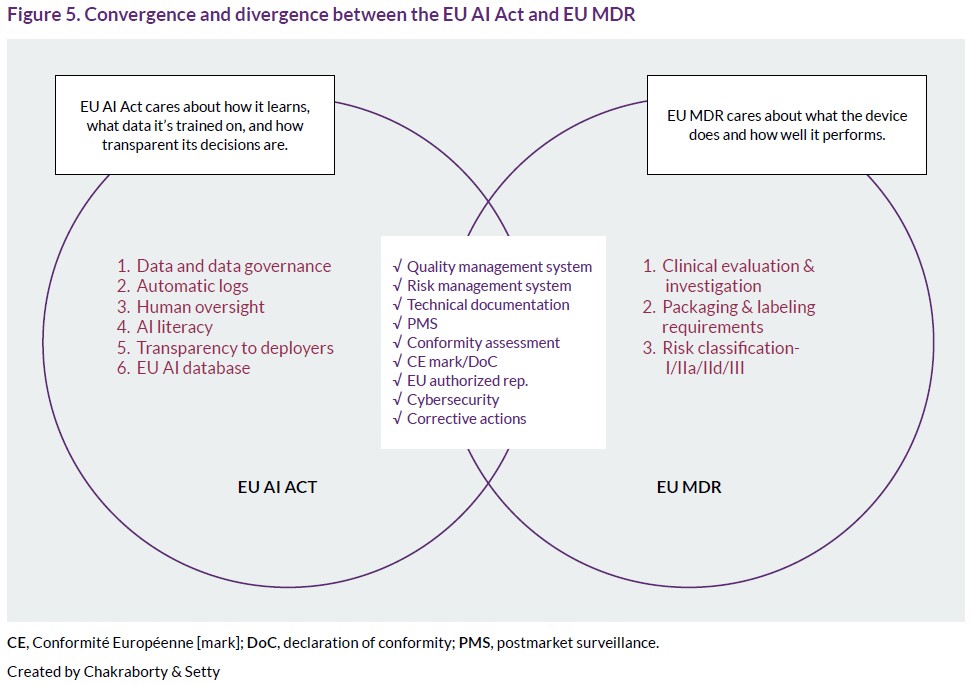

Convergence and divergence: EU AI Act and EU MDR

The EU AI Act and the EU MDR are best understood as complementary rather than contradictory. At a high level, both are built on the same foundation: they aim to protect patients and users by ensuring that products and systems are safe, perform as intended, and are controlled throughout their lifecycle. For AI‑enabled medical devices, this means the EU AI Act does not replace the EU MDR but adds an extra regulatory layer that must be integrated into an already familiar framework. The result is a convergent structure with important points of divergence that regulatory professionals must understand and manage in a coordinated way (Figure 5).

Convergence: Shared foundations

Both the EU MDR and the EU AI Act follow a risk‑based approach, rely on CE marking, and require structured conformity assessment, technical documentation, and postmarket surveillance. Article 11(2) of the EU AI Act explicitly recognizes this alignment by allowing manufacturers of products covered by European Union harmonization legislation in Annex I (including the EU MDR) to prepare a single set of technical documentation and, by extension, a single declaration of conformity that demonstrates compliance with both frameworks.16 At the level of core obligations, there is substantial overlap. Both frameworks require:

- A documented quality management system, including design and change control, verification and validation, and control of suppliers and data;

- A lifecycle risk management process covering identification, evaluation, reduction, and monitoring of risks, with continuous, iterative review;

- Technical documentation that explains design, intended purpose, performance, risk controls, and benefit-risk rationale in a way that can be assessed by notified bodies and competent authorities; and

- Postmarket surveillance and vigilance procedures to actively collect and assess performance and incident data, triggering corrective and preventive actions when needed.

Section II of MDCG 2025‑6 explicitly encourages manufacturers to integrate EU AI Act requirements into their existing EU MDR quality management, risk management, and documentation structures, building one coherent regulatory spine rather than two parallel systems.13 AI‑specific topics, such as accuracy, robustness, and cybersecurity (Article 15 of the EU AI Act), can be embedded into existing EU MDR processes for design control, validation, and vigilance. Likewise, new obligations on logging, oversight, and data management can be addressed by extending existing quality management systems and postmarket surveillance (PMS) mechanisms, rather than creating entirely new structures.

Divergence: Additional AI‑specific obligations16

Despite this shared regulatory spine, the EU AI Act introduces additional obligations beyond the traditional medical device paradigm that must be explicitly addressed for high‑risk AI systems.

Data and data governance (Article 10 EU AI Act). The EU MDR focuses on clinical evidence and performance data, but does not prescribe in detail how training, validation, and test data for algorithms must be governed. By contrast, Article 10 of the EU AI Act requires structured data governance and management practices, including documented design choices, data collection methods, bias prevention, dataset representativeness and completeness, and alignment with other EU data laws, such as the GDPR.

Record‑keeping and automatic logging (Article 12). The EU AI Act requires high‑risk AI systems to implement logging capabilities that automatically record relevant events throughout the system’s lifecycle to support traceability, incident investigation, and postmarket monitoring. The EU MDR requires PMS and vigilance but does not mandate AI‑specific automatic logging at this level of granularity.

Expanded risk management and fundamental rights (Article 9). Under the EU MDR, risk management focuses on health and safety and on ensuring that residual risks are acceptable when weighed against benefits. Article 9 of the EU AI Act expands this scope to require a risk management system that also considers risks to fundamental rights, including discrimination and undue bias, and obliges providers to eliminate or reduce these risks to the extent technically feasible, not only to the extent possible under traditional medical‑device criteria.

Transparency and human oversight (Articles 13 and 14). The EU MDR focuses on labeling, instructions for use, and essential performance information for users. The EU AI Act adds explicit obligations to ensure that users (deployers) understand that they are interacting with AI, can understand the system’s intended purpose, limitations, and conditions of use, and are able to exercise meaningful human oversight. MDCG 2025‑6 emphasizes that explainability and human control become central design and documentation elements for medical device AI under this combined framework.

Accuracy, robustness, and cybersecurity (Article 15). While the EU MDR already requires manufacturers to address performance, reliability, and information security, Article 15 of the EU AI Act introduces AI‑specific expectations for accuracy, robustness, and cybersecurity across the lifecycle. This includes resilience against errors, misuse, data poisoning, adversarial manipulation, and degradation over time, considering both the AI model and datasets as well as the device’s context.

Registration and databases (Articles 49 and 71). Under the EU MDR, device registration, certificates, and relevant economic operators are recorded in EUDAMED. The EU AI Act supplements this with an EU AI database for certain high‑risk AI systems (notably those listed in Annex III), increasing transparency on AI providers, system types, and risk classification. For some AI‑enabled technologies, this may result in obligations under both EUDAMED and the AI database, depending on how the system is classified.

Practical implications for manufacturers

For manufacturers of AI‑enabled medical devices, convergence offers an opportunity to streamline compliance by establishing one integrated system that satisfies both legal acts, using Article 11(2) of the EU AI Act to justify a unified technical documentation set and declaration. However, the divergence means that existing EU MDR systems must be expanded to address new obligations rather than simply reused.
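One way to make this expansion concrete is a simple gap checklist. The sketch below is a hypothetical illustration, not a prescribed method: the `AI_ACT_ADDITIONS` table and the `open_gaps` function are names invented here, and the entries merely restate the divergence points listed in this article, keyed by the EU AI Act articles cited in the text.

```python
from __future__ import annotations

# Obligations this article identifies as going beyond the EU MDR.
# The table structure is an assumption made for illustration.
AI_ACT_ADDITIONS: dict[str, str] = {
    "Article 9": "risk management extended to fundamental rights",
    "Article 10": "data and data governance",
    "Article 12": "record-keeping and automatic logging",
    "Articles 13-14": "transparency and human oversight",
    "Article 15": "accuracy, robustness, and cybersecurity",
    "Article 49": "registration in the EU AI database",
}


def open_gaps(addressed: set[str]) -> list[str]:
    """List the additions not yet covered by the existing EU MDR system.

    `addressed` holds the keys of AI_ACT_ADDITIONS that the manufacturer
    has already integrated into its quality system.
    """
    unknown = addressed - set(AI_ACT_ADDITIONS)
    if unknown:
        raise ValueError(f"Unrecognized entries: {sorted(unknown)}")
    return [
        f"{article}: {topic}"
        for article, topic in AI_ACT_ADDITIONS.items()
        if article not in addressed
    ]


# Example: risk management and Article 15 topics are already integrated.
for gap in open_gaps({"Article 9", "Article 15"}):
    print(gap)
```

Keeping the additions in one table mirrors the single integrated system described above: the existing EU MDR processes stay in place, and the checklist tracks only what must be added to them.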

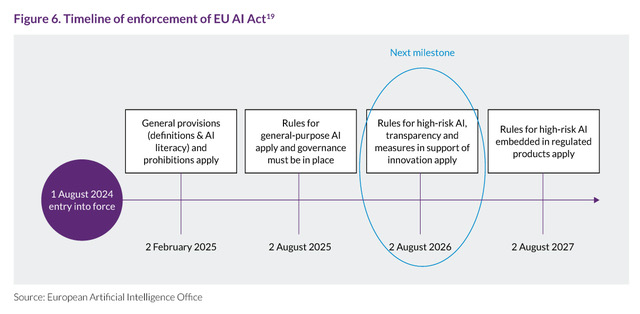

Timeline for enforcement and compliance

With classification and the corresponding requirements outlined, the next consideration is timing. Obligations do not take effect simultaneously; they become applicable in stages, each bringing a different set of duties into effect (Figure 6). Under Article 113 of the EU AI Act, the regulation generally applies from 2 August 2026, when most substantive obligations for high‑risk AI systems begin. Certain general provisions and prohibitions apply earlier, however, and the rules for different categories of high‑risk AI systems take effect at different times.

For manufacturers, a critical distinction lies between systems captured under Annex I (AI in products regulated by European Union harmonization legislation, such as the EU MDR and EU IVDR) and Annex III (stand‑alone high‑risk use cases), as elucidated in Article 113 of the EU AI Act. High‑risk AI systems falling under Annex III must comply from 2 August 2026, whereas high‑risk AI systems that are safety components of, or are themselves, medical devices or IVDs under Annex I must comply from 2 August 2027. Manufacturers that assume the later deadline without verifying whether any of their AI functions fall within Annex III risk entering the compliance period already non‑compliant.16
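The deadline check described above can be sketched in a few lines. This is a minimal illustration that uses only the two dates given in this article. The `APPLICATION_DATES` table and the `earliest_compliance_date` function are hypothetical names, and the route labels are assumptions chosen for readability.

```python
from datetime import date
from typing import Iterable, Optional

# Application dates for high-risk AI systems as stated in this article.
APPLICATION_DATES = {
    "Annex III": date(2026, 8, 2),  # stand-alone high-risk use cases
    "Annex I": date(2027, 8, 2),    # AI in, or as, medical devices and IVDs
}


def earliest_compliance_date(routes: Iterable[str]) -> Optional[date]:
    """Return the earliest date by which the product must comply.

    `routes` lists every high-risk route that applies to the product.
    Returns None when no route applies.
    """
    routes = list(routes)
    unknown = [r for r in routes if r not in APPLICATION_DATES]
    if unknown:
        raise ValueError(f"Unrecognized routes: {unknown}")
    if not routes:
        return None
    return min(APPLICATION_DATES[r] for r in routes)


# A device with an additional Annex III function must meet the earlier date.
print(earliest_compliance_date(["Annex I", "Annex III"]))  # 2026-08-02
```

Taking the minimum over all applicable routes encodes the warning in the text: a manufacturer that plans only for the Annex I date can miss an earlier obligation triggered by an Annex III function.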

Conclusion

As this first article in the series concludes, one message is clear: the EU MDR/IVDR and the EU AI Act are not competing frameworks but complementary layers of a single regulatory system. Together, they reshape how risk, safety, and accountability are understood for AI-enabled medical technologies, extending attention from static devices to adaptive algorithms, data governance, and human oversight across the lifecycle. Rather than signaling a crisis, this evolution reflects the EU’s effort to keep regulatory practice aligned with rapidly changing technological capabilities.

For regulatory and quality professionals, the focus now shifts from understanding what the EU AI Act means to operationalizing it within existing structures. This article has examined scope, classification, and the conceptual interplay between the EU MDR and the AI Act, using examples to illustrate where boundaries blur and where obligations clearly diverge. The second article in this series will transition from principles to practice, exploring how existing international and European standards, combined with current EU MDR processes, can be leveraged to meet the AI Act's requirements for risk management, data governance, transparency, and postmarket governance.

Abbreviations EU AI Act, EU Artificial Intelligence Act; EU IVDR, EU In Vitro Diagnostic Regulation; EU MDR, EU Medical Devices Regulation; EUDAMED, European Database on Medical Devices; GDPR, General Data Protection Regulation [EU]; MDCG, Medical Device Coordination Group; NLF, New Legislative Framework [EU]; PMS, postmarket surveillance.

About the authors Geethapriya (Priya) Setty, MS, MBA, RAC, PMP, is a manager of global regulatory affairs at Hologic, specializing in regulatory strategy, regulatory intelligence, and digital health/AI compliance for high‑risk devices. She has more than eight years of regulatory affairs experience and over twenty years in healthcare, including prior work as a pediatric occupational therapist. Setty holds an MS from the University of Mumbai, India, and an MBA from St. Thomas University, Minnesota. She is a member of RAPS and a board member of the Southern California Local Networking Group. She holds the RAC-Devices credential.

Attrayee Chakraborty, MS, MSc, CQSP, is a quality systems engineer at Analog Devices. She has experience in quality systems and regulatory affairs in medical devices, with a focus on quality management systems and AI regulation. Chakraborty has a Master of Science degree in Regulatory Affairs from Northeastern University, a Master of Science degree from the University of Calcutta, India, and holds the Certified Quality Systems Professional certification. She is a RAPS member and recipient of the RAPS Rising Star Award (2025).

Disclaimer This article represents the views and opinions solely of the authors and does not constitute, nor should it be construed as, legal or professional advice. The authors’ affiliation with any companies does not imply any endorsement, sponsorship, or responsibility by these entities for the content herein.

Acknowledgment This article was adapted from a presentation by the authors at the 2025 Convergence in Pittsburgh, PA, held 7-9 October. The authors thank John Brandstetter, Cécile van der Heijden, Aleksandr Tiulkanov, Jay Vaishnav, Sean Smith, Rita Ahmed, and Sue Dahlquist for editorial support and feedback during the development stages of the original presentation and this article.

Citation Chakraborty A, Setty G. Navigating convergence and divergence between the EU MDR and EU AI Act. RAPS Journal of Regulatory Affairs. 2026;1(2):4-17. Published online 6 March 2026. https://www.raps.org/resource/navigating-convergence-and-divergence-between-the.html

References All references were last checked and verified on 16 February 2026.

- Xu Y, et al. Artificial intelligence: a powerful paradigm for scientific research. Innovation. Published 28 October 2021. Accessed 15 December 2025. https://doi.org/10.1016/j.xinn.2021.100179

- Silcox C, et al. The potential for artificial intelligence to transform healthcare: Perspectives from international health leaders. NPJ Digit Med. Published 9 April 2024. Accessed 15 December 2025. https://www.nature.com/articles/s41746-024-01097-6

- Chustecki M. Benefits and risks of AI in health care: Narrative review. Interact J Med Res. Published 18 November 2024. Accessed 15 December 2025. https://pmc.ncbi.nlm.nih.gov/articles/PMC11612599

- Rajkomar A, et al. Ensuring fairness in machine learning to advance health equity. Nat Med. Published 8 April 2024. Accessed 15 December 2025. https://pubmed.ncbi.nlm.nih.gov/30508424/

- Liu J. ChatGPT: Perspectives from human-computer interaction and psychology. Front Artif Intell. Published 18 June 2024. Accessed 15 December 2025. https://pmc.ncbi.nlm.nih.gov/articles/PMC11217544/

- Aboy M, et al. Navigating the EU AI Act: Implications for regulated digital medical products. NPJ Digit Med. Published 6 September 2024. Accessed 15 December 2025. https://www.nature.com/articles/s41746-024-01232-3

- European Commission. New legislative framework. Not dated. Accessed 15 December 2025. https://single-market-economy.ec.europa.eu/single-market/goods/new-legislative-framework_en

- Hacker P. The AI Act between digital and sectoral regulations. Bertelsmann Stiftung. Published December 2024. Accessed 14 December 2025. https://www.bertelsmann-stiftung.de/fileadmin/files/user_upload/The_AI_Act_between_Digital_and_Sectoral_Regulations__2024_en.pdf

- Craven J. Convergence: Rebuilding public trust in science with empathy, transparency. Regulatory Focus. Published 6 October 2025. Accessed 15 December 2025. https://www.raps.org/news-and-articles/news-articles/2025/10/2025-convergence-plenary

- Food and Drug Administration. Factors to consider when making benefit-risk determinations in medical device premarket approval and de novo classifications. Issued 30 August 2019. Accessed 15 December 2025. https://www.fda.gov/media/99769/download

- Abulibdeh R, et al. The illusion of safety: A report to the FDA on AI healthcare product approvals. PLOS Digit Health. Published 5 June 2025. Accessed 15 December 2025. https://pmc.ncbi.nlm.nih.gov/articles/PMC12140231/

- European Commission. AI Act. Not dated. Accessed 15 December 2025. https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

- AIB 2025-1 – MDCG 2025-6 – Interplay between the Medical Devices Regulation (MDR) & In vitro Diagnostic Medical Devices Regulation (IVDR) and the Artificial Intelligence Act (AIA) [guidance]. Dated June 2025. Accessed 15 December 2025. https://health.ec.europa.eu/document/download/b78a17d7-e3cd-4943-851d-e02a2f22bbb4_en?filename=mdcg_2025-6_en.pdf

- Future of Life Institute. Historic timeline. Not dated. Accessed 15 December 2025. https://artificialintelligenceact.eu/developments/

- European Parliament. EU AI Act: First regulation on artificial intelligence. Published 8 June 2023. Accessed 15 December 2025. https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence

- Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence and amending Regulations (EC) No 300/2008, (EU) No 167/2013, (EU) No 168/2013, (EU) 2018/858, (EU) 2018/1139 and (EU) 2019/2144 and Directives 2014/90/EU, (EU) 2016/797 and (EU) 2020/1828 (Artificial Intelligence Act). Accessed 15 December 2025. https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=OJ:L_202401689

- Renner C, et al. The EU AI Act is here – With extraterritorial reach. Morgan Lewis. Published 26 July 2024. Accessed 15 December 2025. https://www.morganlewis.com/pubs/2024/07/the-eu-artificial-intelligence-act-is-here-with-extraterritorial-reach

- Moore L, et al. A practical guide to the extraterritorial reach of the AI Act. William Fry. Dated 23 July 2024. Accessed 15 December 2025. https://www.williamfry.com/knowledge/a-practical-guide-to-the-extraterritorial-reach-of-the-ai-act/

- Data Europa Academy. Next steps to compliance: Preparing for the EU AI Act [webinar slides]. Dated 18 July 2025. Accessed 15 December 2025. https://data.europa.eu/sites/default/files/course/webinar-ai-act-slides.pdf